Here’s a thread from a little while back in which I outline my critique of the (theological) assumptions implicit in much casual thinking about artificial intelligence, and indeed, intelligence as such.

Another late night thought, this time on Artificial General Intelligence (AGI): if you approach AGI research as if you’re trying to find an algorithm to immanentize the eschaton, then you will be either disappointed or deluded.

There are a bunch of tacit assumptions regarding the nature of computation that tend to distort the way we think about what it means to solve certain problems computationally, and thus what it would be to create a computational system that could solve problems more generally.

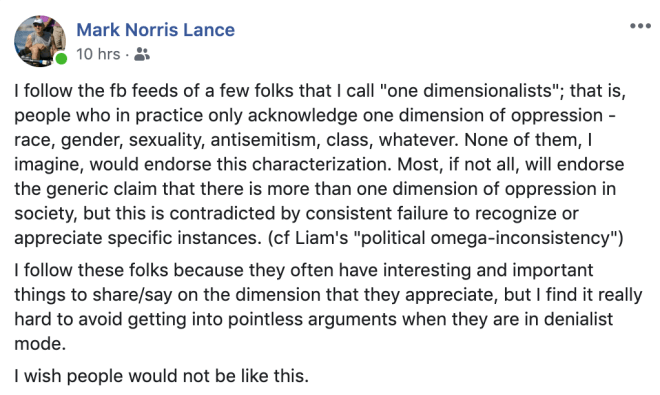

There are plenty of people who have already pointed out the theological valence of the conclusions reached on the basis of these assumptions (e.g., the singularity, Roko’s Basilisk, etc.); but these criticisms are low-hanging fruit, most often picked by casual anti-tech hacks.

Diagnosing the assumptions themselves is much harder. One can point to moments in which they became explicit (e.g., Leibniz, Hilbert, etc.), and thereby either influential, refuted, or both; but it is harder to describe the illusion of coherence that binds them together.

This illusion is essentially related to that which I complained about in my thread about moral logic a few days ago: the idea that there is always an optimal solution to any problem, even if we cannot find it; whereas, in truth, perfectibility is a vanishingly rare thing.

Using the term ‘perfectibility’ makes the connection to theology much clearer, insofar as it is precisely this that forms the analogical bridge between creator and created in the Christian tradition. Divinity is always conceptually liminal, and perfection is a popular limit.

If you’re looking for a reference here, look at the dialectical evolution of the transcendentals (e.g., unum, bonum, verum, etc.) from Augustine and Anselm to Aquinas and Duns Scotus. The universality of perfectible attributes in creation is the key to the singularity of God.

This illusion of universal perfectibility is the theological foundation of the illusion of computational omnipotence.

We have consistently overestimated what computation is capable of throughout history, whether computation was seen as an algorithmic method executed by humans, or a process of automated deduction realised by a machine. The fictional record is crystal clear on this point.

Instead of imagining machines that can do a task better than we can, we imagine machines that can do it in the best possible way. When we ask why, the answer is invariably some variant upon: it is a machine and therefore must be infallible.

This is absurd enough in certain specific cases: what could a ‘best possible poem’ even be? There is no well-ordering of all possible poems, only ever a complex partial order whose rankings unravel as the many purposes of poetry diverge from one another.
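To make the point about partial orders concrete, here is a minimal sketch. The poems, the two criteria, and the scores are all invented for illustration; nothing here claims poetry can actually be scored this way. It only shows that once there is more than one purpose, "better than" can leave some pairs incomparable, so there are maximal elements but no single best.

```python
from typing import Dict, List

Scores = Dict[str, int]

# Hypothetical scores for three imaginary poems on two invented criteria.
POEMS: Dict[str, Scores] = {
    "elegy": {"consolation": 9, "wit": 2},
    "limerick": {"consolation": 1, "wit": 9},
    "doggerel": {"consolation": 1, "wit": 3},
}


def dominates(a: Scores, b: Scores) -> bool:
    """True if a is at least as good as b on every criterion and strictly better on one."""
    return all(a[k] >= b[k] for k in a) and any(a[k] > b[k] for k in a)


def maximal(poems: Dict[str, Scores]) -> List[str]:
    """Names of poems that no other poem dominates (the maximal elements)."""
    return sorted(
        name
        for name, scores in poems.items()
        if not any(
            dominates(other_scores, scores)
            for other, other_scores in poems.items()
            if other != name
        )
    )


if __name__ == "__main__":
    # Neither the elegy nor the limerick dominates the other: they are incomparable.
    print(maximal(POEMS))
```

Under these made-up scores, both the elegy and the limerick survive as maximal, and asking which of the two is "the best" has no answer until you pick a purpose.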

However, the deep, and seemingly coherent, computational illusion is that there is not just a best solution to every problem, but that there is a best way of finding such bests in every circumstance. This implicitly equates true AGI with the Godhead.

One response to this accusation is to say: ‘Of course, we cannot achieve this meta-optimum, but we can approximate it.’

Compare: ‘We cannot reach the largest prime number, but we can still approximate it.’

This is how you trade disappointment for delusion.
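For anyone who wants the largest prime comparison spelled out, here is a small sketch of Euclid's classical construction (the function names are my own, chosen for illustration). Given any finite list of primes, it produces a prime that is not on the list, which is why there is no largest prime to approach in the first place.

```python
from typing import List


def smallest_prime_factor(n: int) -> int:
    """Return the smallest prime factor of n (for n >= 2) by trial division."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d
        d += 1
    return n  # no divisor found up to sqrt(n), so n itself is prime


def prime_beyond(primes: List[int]) -> int:
    """Euclid's construction: return a prime that is not in the given list of primes."""
    product = 1
    for p in primes:
        product *= p
    # product + 1 leaves remainder 1 when divided by any listed prime,
    # so its smallest prime factor cannot be in the list.
    return smallest_prime_factor(product + 1)


if __name__ == "__main__":
    print(prime_beyond([2, 3, 5]))
    print(prime_beyond([2, 3, 5, 7, 11, 13]))
```

Note that the new prime need not be bigger than everything on the list; it is merely missing from it. That is enough: however long your list, it is never complete, so there is no limit for an approximation to converge on.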

There are some quite sophisticated mathematical delusions out there. But they are still illusions. There is no way to cheat your way to computational omnipotence. There is nothing but strategy all the way down.

This is not to say that there aren’t better/worse strategies, or that we can’t say some useful and perhaps even universal things about how you tell one from the other. Historically, proofs that we cannot fulfil our deductive ambitions lead to better ambitions and better tools.

The computational illusion, or the true Mythos of Logos, amounts to the idea that one can somehow brute force reality. There is more than a mere analogy here, if you believe Scott Aaronson’s claims about learning and cryptography (I’m inclined to).

It continually surprises me just how many people, including those involved in professional AGI research, still approach things in this way. It looks as if, in these cases, the engineering perspective (optimality) has overridden the logical one (incompleteness).

I’ve said it before, and I’ll say it again: you cannot brute force mathematical discovery; there is no algorithm that could progressively search the space of possible theorems. If this does not work in the mathematical world, why would we expect it to work in the physical one?

For additional suggestive material on this and related problems, consider: the problem of induction, Gödel’s incompleteness theorems, and the halting problem.

Anyway, to conclude: we will someday make things that are smarter than us in every way, but the intermediate stages involve things smarter than us in some ways. We will not cross this intelligence threshold by merely adding more computing power.

However it happens, it will not be because of an exponential process of self-improvement that we have accidentally stumbled upon. Self-improvement is not homogeneous, or without autocatalytic instabilities. Humans are self-improving systems, and we are clearly not gods.